How Do I Know When a Web Indexing Batch Is Reviewed

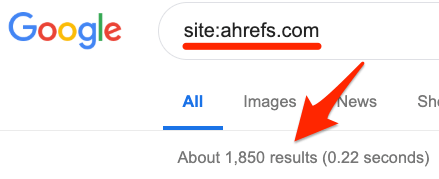

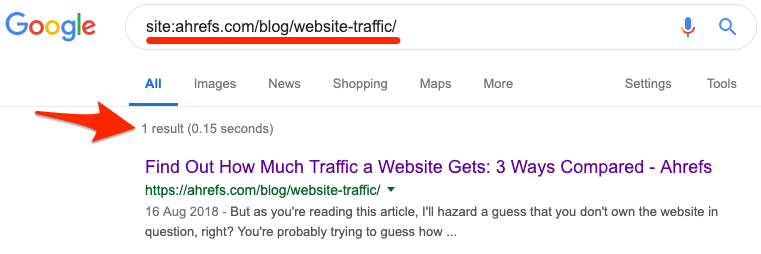

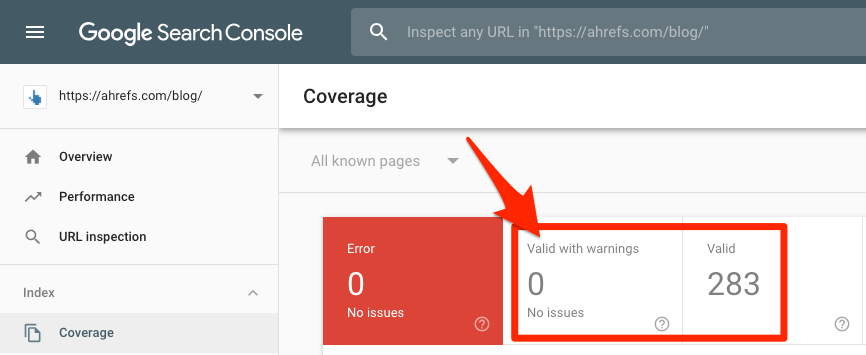

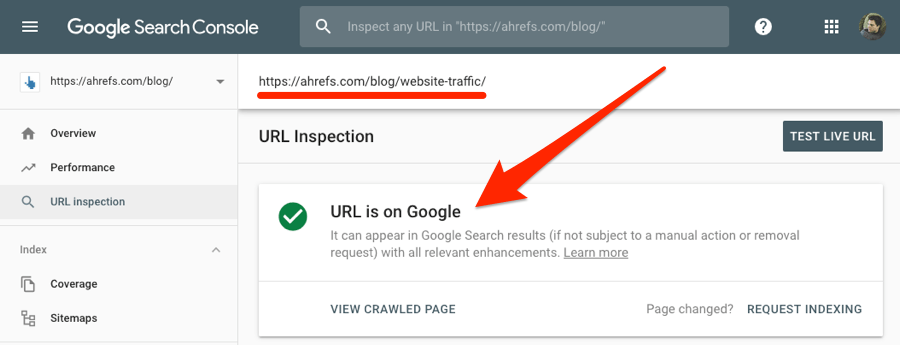

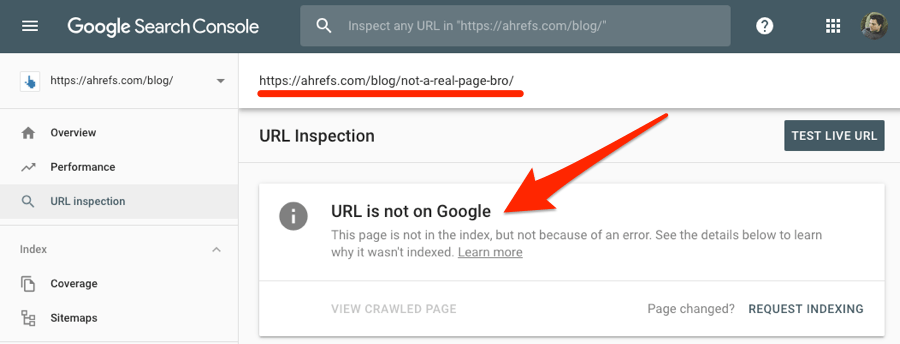

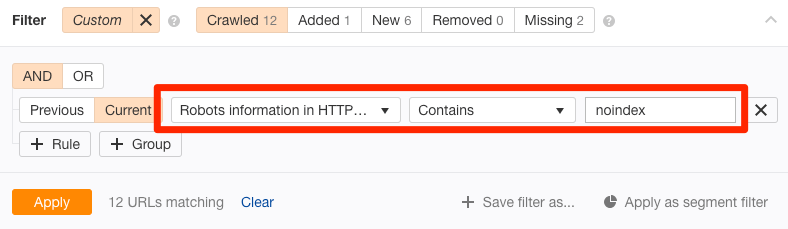

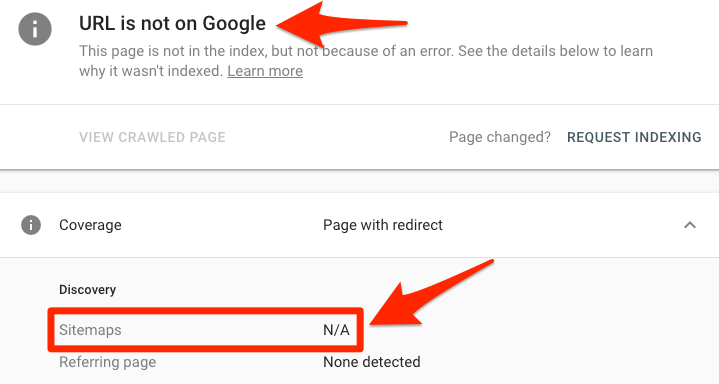

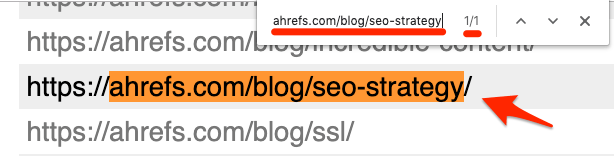

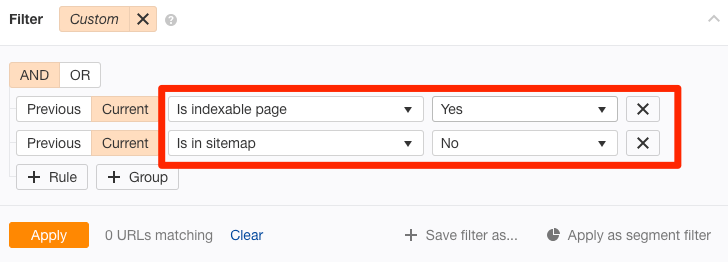

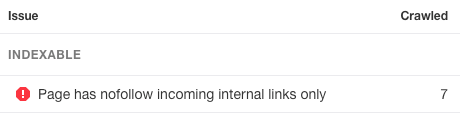

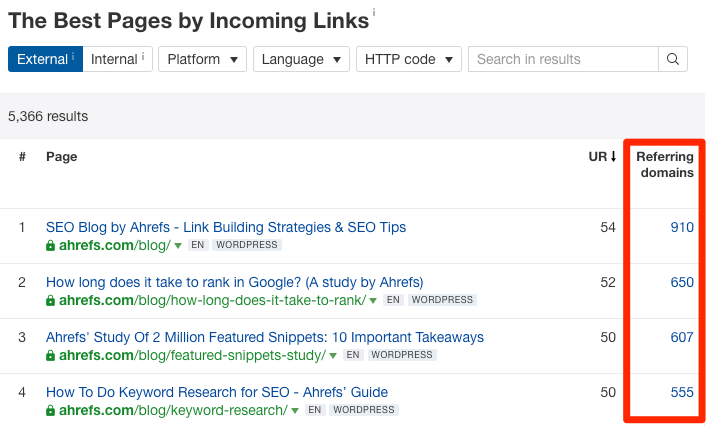

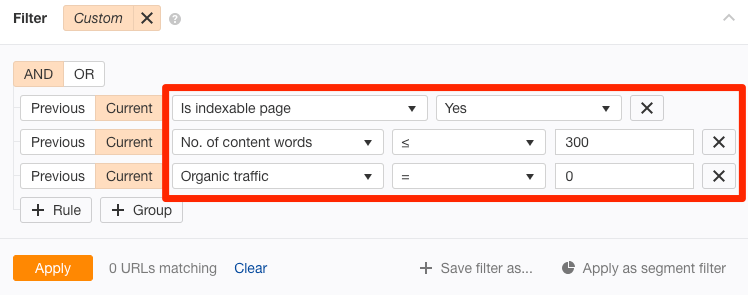

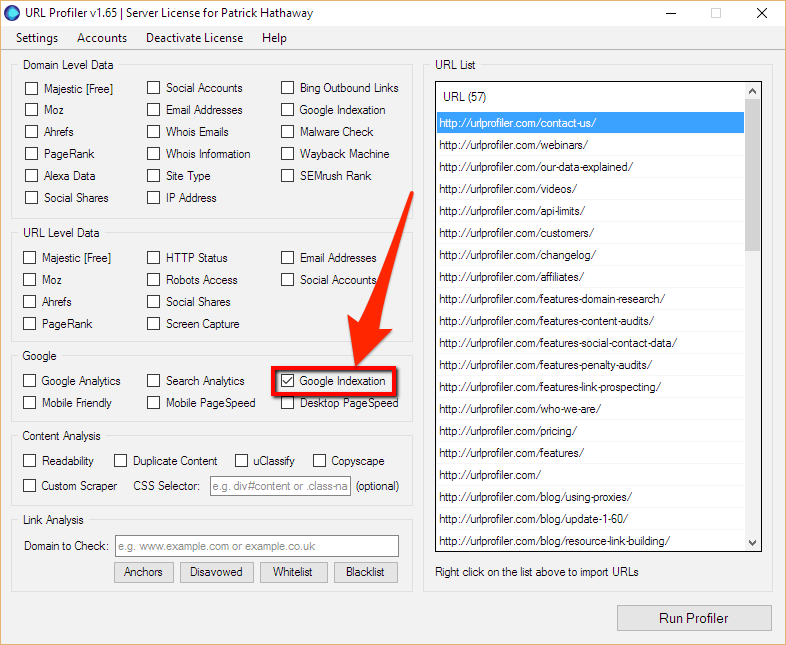

If Google doesn't index your website, then you're pretty much invisible. You won't show up for any search queries, and yous won't become any organic traffic whatever. Naught. Nada. Nix. Given that you're hither, I'm guessing this isn't news to you. So let'southward get straight downwards to business. This commodity teaches you how to fix any of these three problems: But outset, let'south make certain nosotros're on the aforementioned page and fully-sympathize this indexing malarkey. New to SEO? Check out our Google discovers new web pages past crawling the web, and so they add those pages to their alphabetize. They do this using a web spider called Googlebot. Confused? Let's ascertain a few fundamental terms. Here's a video from Google that explains the process in more item: https://www.youtube.com/lookout man?v=BNHR6IQJGZs When you Google something, y'all're asking Google to return all relevant pages from their index. Because in that location are often millions of pages that fit the nib, Google's ranking algorithm does its best to sort the pages so that you come across the all-time and most relevant results first. The critical point I'm making hither is that indexing and ranking are ii different things. Indexing is showing upward for the race; ranking is winning. You can't win without showing upwards for the race in the starting time place. Go to Google, and then search for This number shows roughly how many of your pages Google has indexed. If you desire to check the index status of a specific URL, use the same No results volition bear witness upwardly if the page isn't indexed. Now, information technology's worth noting that if you're a Google Search Panel user, you can utilize the Coverage report to get a more accurate insight into the alphabetize status of your website. Just become to: Google Search Console > Alphabetize > Coverage Look at the number of valid pages (with and without warnings). If these two numbers total anything but zip, and then Google has at least some of the pages on your website indexed. If non, then yous have a astringent trouble because none of your web pages are indexed. Sidenote. Not a Google Search Console user? Sign upwardly. It's gratis. Everyone who runs a website and cares about getting traffic from Google should apply Google Search Panel. Information technology's that important. You can also apply Search Console to cheque whether a specific page is indexed. To practise that, paste the URL into the URL Inspection tool. If that folio is indexed, information technology'll say "URL is on Google." If the page isn't indexed, you'll see the words "URL is not on Google." Found that your website or spider web folio isn't indexed in Google? Attempt this: This procedure is good practice when yous publish a new mail or page. You're finer telling Google that you've added something new to your site and that they should accept a look at it. However, requesting indexing is unlikely to solve underlying problems preventing Google from indexing sometime pages. If that's the example, follow the checklist beneath to diagnose and fix the problem. Here are some quick links to each tactic—in example you lot've already tried some: Is Google not indexing your entire website? It could exist due to a crawl block in something chosen a robots.txt file. To bank check for this result, get to yourdomain.com/robots.txt. Look for either of these ii snippets of code: Both of these tell Googlebot that they're not allowed to crawl whatsoever pages on your site. To set up the event, remove them. It'south that uncomplicated. A crawl block in robots.txt could also be the culprit if Google isn't indexing a single web page. To bank check if this is the example, paste the URL into the URL inspection tool in Google Search Console. Click on the Coverage block to reveal more details, and then await for the "Crawl immune? No: blocked past robots.txt" fault. This indicates that the folio is blocked in robots.txt. If that's the instance, recheck your robots.txt file for any "disallow" rules relating to the page or related subsection. Remove where necessary. Google won't index pages if you tell them not to. This is useful for keeping some spider web pages individual. There are two ways to do it: Pages with either of these meta tags in their This is a meta robots tag, and it tells search engines whether they tin can or can't index the page. Sidenote. The primal role is the "noindex" value. If y'all run into that, and then the page is prepare to noindex. To notice all pages with a noindex meta tag on your site, run a crawl with Ahrefs' Site Inspect. Become to the Indexability report. Look for "Noindex folio" warnings. Click through to run into all affected pages. Remove the noindex meta tag from any pages where information technology doesn't belong. Crawlers besides respect the X‑Robots-Tag HTTP response header. Y'all can implement this using a server-side scripting language like PHP, or in your .htaccess file, or past changing your server configuration. The URL inspection tool in Search Console tells yous whether Google is blocked from itch a folio because of this header. Only enter your URL, and so look for the "Indexing immune? No: 'noindex' detected in '10‑Robots-Tag' http header" If you desire to check for this issue across your site, run a crawl in Ahrefs' Site Inspect tool, and so use the "Robots information in HTTP header" filter in the Page Explorer: Tell your developer to exclude pages you want indexing from returning this header. Recommended reading: Robots meta tag and X‑Robots-Tag HTTP header specifications A sitemap tells Google which pages on your site are important, and which aren't. It may also requite some guidance on how often they should exist re-crawled. Google should be able to find pages on your website regardless of whether they're in your sitemap, but information technology's all the same good practice to include them. After all, in that location's no point making Google's life difficult. To check if a folio is in your sitemap, utilize the URL inspection tool in Search Console. If you see the "URL is not on Google" fault and "Sitemap: N/A," and then it isn't in your sitemap or indexed. Non using Search Console? Head to your sitemap URL—usually, yourdomain.com/sitemap.xml—and search for the page. Or, if you want to find all the crawlable and indexable pages that aren't in your sitemap, run a clamber in Ahrefs' Site Audit. Go to Page Explorer and apply these filters: These pages should be in your sitemap, so add together them. Once washed, permit Google know that you've updated your sitemap by pinging this URL: Supervene upon that final role with your sitemap URL. You should then run across something like this: That should speed upward Google's indexing of the page. A canonical tag tells Google which is the preferred version of a folio. It looks something like this: Most pages either have no approved tag, or what's chosen a self-referencing canonical tag. That tells Google the page itself is the preferred and probably the just version. In other words, y'all want this page to exist indexed. Simply if your folio has a rogue canonical tag, then it could be telling Google about a preferred version of this page that doesn't exist. In which case, your page won't get indexed. To bank check for a approved, use Google'due south URL inspection tool. Yous'll come across an "Alternating page with approved tag" alert if the canonical points to another page. If this shouldn't be there, and you want to index the page, remove the canonical tag. Of import Approved tags aren't always bad. Most pages with these tags will have them for a reason. If you lot see that your page has a canonical gear up, then check the canonical page. If this is indeed the preferred version of the page, and there's no need to index the page in question also, then the canonical tag should stay. If you desire a quick fashion to find rogue canonical tags across your unabridged site, run a clamber in Ahrefs' Site Inspect tool. Get to the Folio Explorer. Use these settings: This looks for pages in your sitemap with non-self-referencing canonical tags. Because yous almost certainly want to alphabetize the pages in your sitemap, y'all should investigate farther if this filter returns any results. It'south highly likely that these pages either take a rogue canonical or shouldn't be in your sitemap in the first place. Orphan pages are those without internal links pointing to them. Because Google discovers new content by itch the web, they're unable to discover orphan pages through that process. Website visitors won't be able to observe them either. To bank check for orphan pages, clamber your site with Ahrefs' Site Audit. Adjacent, check the Links report for "Orphan folio (has no incoming internal links)" errors: This shows all pages that are both indexable and present in your sitemap, still have no internal links pointing to them. IMPORTANT This process only works when ii things are true: Not confident that all the pages you want to be indexed are in your sitemap? Try this: Whatsoever URLs not found during the crawl are orphan pages. You can fix orphan pages in one of two ways: Nofollow links are links with a rel="nofollow" tag. They prevent the transfer of PageRank to the destination URL. Google too doesn't crawl nofollow links. Here'south what Google says almost the matter: Essentially, using nofollow causes us to driblet the target links from our overall graph of the web. Even so, the target pages may still announced in our index if other sites link to them without using nofollow, or if the URLs are submitted to Google in a Sitemap. In short, you should brand sure that all internal links to indexable pages are followed. To exercise this, apply Ahrefs' Site Inspect tool to crawl your site. Check the Links written report for indexable pages with "Page has nofollow incoming internal links only" errors: Remove the nofollow tag from these internal links, assuming that you want Google to index the folio. If non, either delete the page or noindex it. Recommended reading: What Is a Nofollow Link? Everything You lot Demand to Know (No Jargon!) Google discovers new content past crawling your website. If you fail to internally link to the folio in question then they may non be able to detect it. 1 easy solution to this problem is to add some internal links to the page. You can practise that from whatsoever other web folio that Google can crawl and index. Still, if you want Google to index the page as fast as possible, information technology makes sense to exercise so from 1 of your more "powerful" pages. Why? Because Google is likely to recrawl such pages faster than less important pages. To do this, head over to Ahrefs' Site Explorer, enter your domain, then visit the All-time past links report. This shows all the pages on your website sorted past URL Rating (UR). In other words, information technology shows the nigh authoritative pages first. Skim this list and look for relevant pages from which to add internal links to the page in question. For example, if we were looking to add together an internal link to our guest posting guide, our link building guide would probably offering a relevant place from which to practice then. And that page just so happens to be the 11th almost authoritative page on our blog: Google volition then meet and follow that link next time they recrawl the page. pro tip Paste the page from which you added the internal link into Google's URL inspection tool. Hit the "Request indexing" button to permit Google know that something on the folio has inverse and that they should recrawl it every bit before long as possible. This may speed upwardly the process of them discovering the internal link and consequently, the page you want indexing. Google is unlikely to index low-quality pages because they hold no value for its users. Here'due south what Google's John Mueller said about indexing in 2018: We never alphabetize all known URLs, that's pretty normal. I'd focus on making the site crawly and inspiring, so things usually work out better. — 🍌 John 🍌 (@JohnMu) January 3, 2018 He implies that if you desire Google to index your website or spider web folio, information technology needs to be "awesome and inspiring." If y'all've ruled out technical problems for the lack of indexing, then a lack of value could exist the culprit. For that reason, it's worth reviewing the page with fresh eyes and asking yourself: Is this page genuinely valuable? Would a user observe value in this page if they clicked on it from the search results? If the answer is no to either of those questions, and then you need to improve your content. You can find more potentially depression-quality pages that aren't indexed using Ahrefs' Site Audit tool and URL Profiler. To do that, go to Folio Explorer in Ahrefs' Site Audit and employ these settings: This will render "sparse" pages that are indexable and currently get no organic traffic. In other words, at that place'due south a decent adventure they aren't indexed. Export the report, then paste all the URLs into URL Profiler and run a Google Indexation check. IMPORTANT It'south recommended to use proxies if you're doing this for lots of pages (i.east., over 100). Otherwise, you run the risk of your IP getting banned past Google. If you tin can't do that, so another culling is to search Google for a "free majority Google indexation checker." There are a few of these tools around, just well-nigh of them are limited to <25 pages at a time. Check any non-indexed pages for quality issues. Ameliorate where necessary, then request reindexing in Google Search Console. You should besides aim to set up issues with duplicate content. Google is unlikely to alphabetize duplicate or virtually-duplicate pages. Use the Indistinguishable content report in Site Audit to check for these problems. Having likewise many low-quality pages on your website serves merely to waste crawl budget. Here's what Google says on the affair: Wasting server resources on [low-value-add pages] will drain crawl activity from pages that do actually accept value, which may cause a significant filibuster in discovering great content on a site. Think of it like a teacher grading essays, one of which is yours. If they have 10 essays to grade, they're going to get to yours quite quickly. If they have a hundred, information technology'll take them a bit longer. If they have thousands, their workload is too high, and they may never get around to grading your essay. Google does state that "crawl budget […] is not something well-nigh publishers have to worry about," and that "if a site has fewer than a few k URLs, almost of the fourth dimension it will exist crawled efficiently." Still, removing low-quality pages from your website is never a bad affair. It can only take a positive outcome on crawl budget. You can use our content audit template to find potentially low-quality and irrelevant pages that can exist deleted. Backlinks tell Google that a web folio is important. Afterward all, if someone is linking to it, then information technology must hold some value. These are pages that Google wants to index. For total transparency, Google doesn't only index web pages with backlinks. In that location are plenty (billions) of indexed pages with no backlinks. However, because Google sees pages with loftier-quality links as more than important, they're likely to crawl—and re-clamber—such pages faster than those without. That leads to faster indexing. We have plenty of resources on building high-quality backlinks on the blog. Accept a wait at a few of the guides beneath. Having your website or web page indexed in Google doesn't equate to rankings or traffic. They're ii different things. Indexing means that Google is aware of your website. It doesn't hateful they're going to rank information technology for any relevant and worthwhile queries. That's where SEO comes in—the art of optimizing your web pages to rank for specific queries. In curt, SEO involves: Here's a video to get you started with SEO: https://www.youtube.com/watch?v=DvwS7cV9GmQ … and some manufactures: In that location are only ii possible reasons why Google isn't indexing your website or web page: It's entirely possible that both of those issues exist. Yet, I would say that technical problems are far more than common. Technical problems tin can likewise atomic number 82 to the automobile-generation of indexable depression-quality content (e.g., problems with faceted navigation). That isn't good. Still, running through the checklist to a higher place should solve the indexation issue nine times out of ten. Just recollect that indexing ≠ ranking. SEO is nevertheless vital if you want to rank for whatsoever worthwhile search queries and concenter a constant stream of organic traffic.

site:yourwebsite.com

site:yourwebsite.com/spider web-page-slug operator.

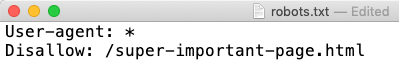

one) Remove clamber blocks in your robots.txt file

User-agent: Googlebot Disallow: /

User-amanuensis: * Disallow: /

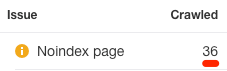

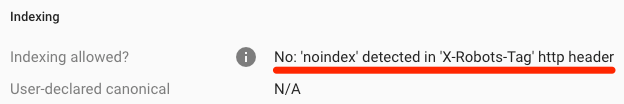

ii) Remove rogue noindex tags

Method 1: meta tag

<head> section won't be indexed by Google:<meta name="robots" content="noindex">

<meta name="googlebot" content="noindex">

Method 2: X‑Robots-Tag

3) Include the page in your sitemap

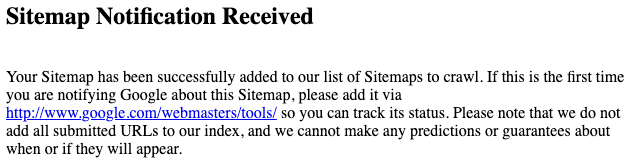

http://www.google.com/ping?sitemap=http://yourwebsite.com/sitemap_url.xml

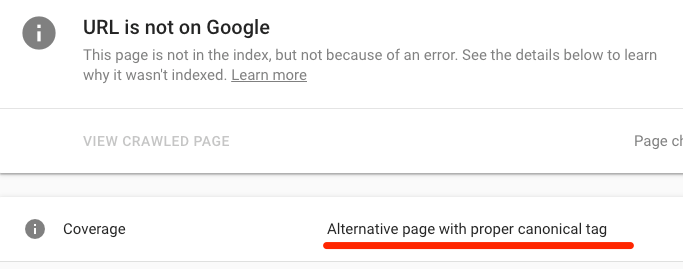

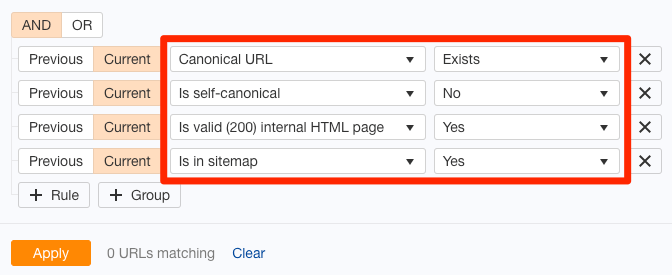

4) Remove rogue approved tags

<link rel="canonical" href="/page.html/">

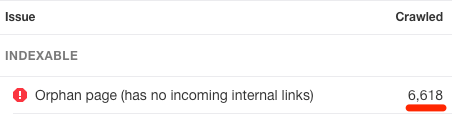

5) Check that the folio isn't orphaned

half dozen) Gear up nofollow internal links

7) Add "powerful" internal links

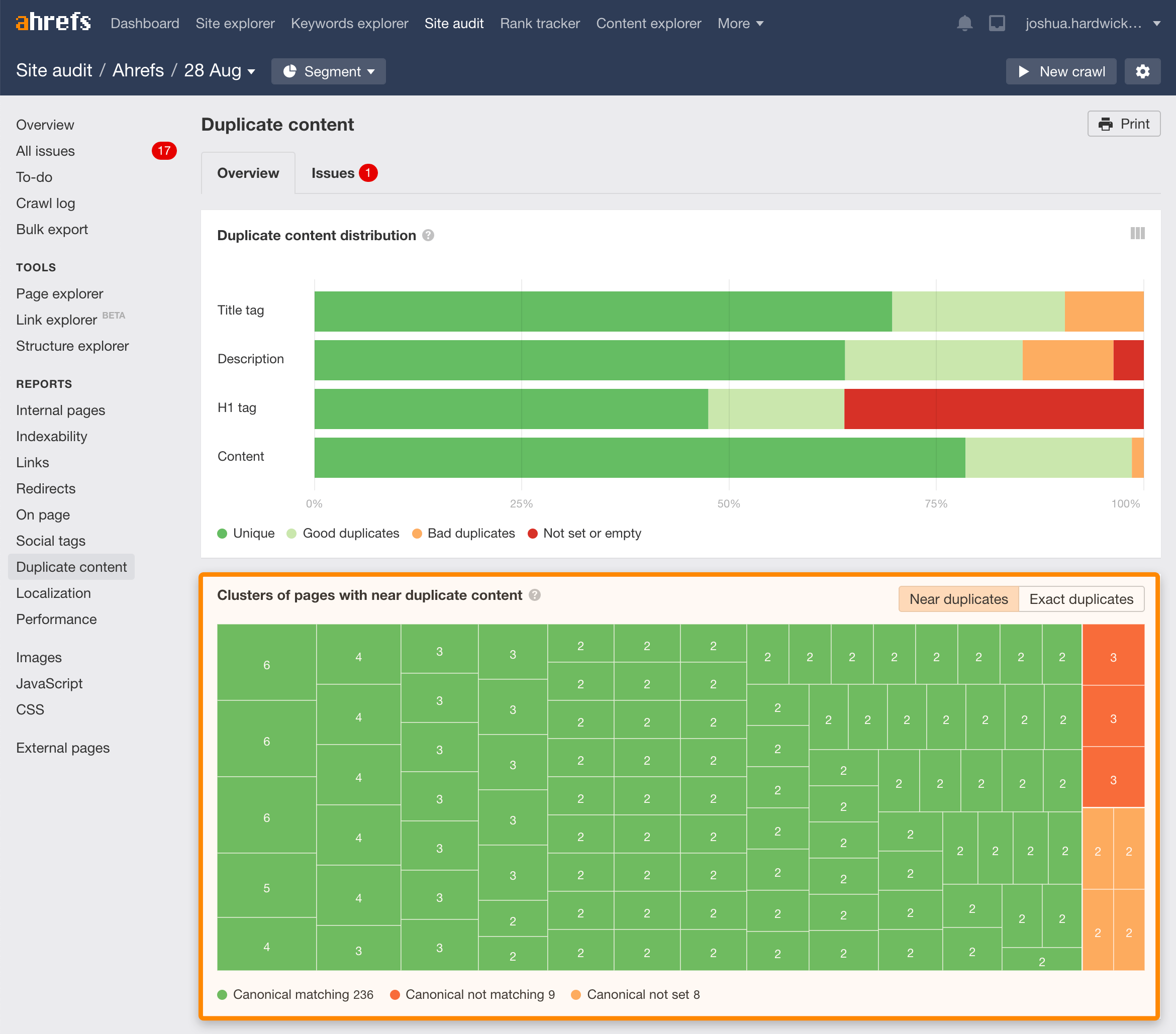

8) Make certain the page is valuable and unique

nine) Remove low-quality pages (to optimize "crawl budget")

x) Build high-quality backlinks

Indexing ≠ ranking

Last thoughts

mendozahavendecked.blogspot.com

Source: https://ahrefs.com/blog/google-index/

0 Response to "How Do I Know When a Web Indexing Batch Is Reviewed"

Postar um comentário